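Introduction

Installing Kubernetes (k8s) with kubeadm is much simpler than installing it by hand. I have long wanted to write a short, clear installation tutorial, and I hope this one is useful. The first k8s release of 2020 was 1.18.0; the latest version at the time of writing is 1.19.4, and 1.20 should be released by the end of the year. This guide installs 1.18.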

System preparation

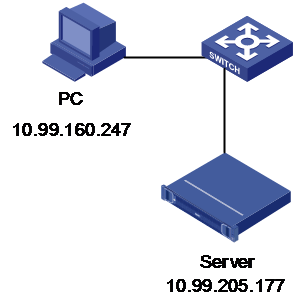

- Set up CentOS 7 virtual machines, each with a static IP address

Install three virtual machines: one as the master node and two as worker nodes

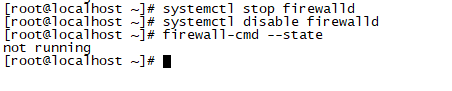

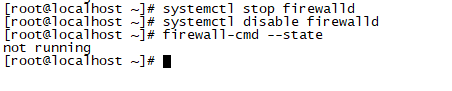

- Disable the firewall

[root@master01 ~]

[root@master01 ~]

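Note: the firewall can stay on, but then every required port needs its own allow rule, which is tedious. For our own development environment we simply turn the firewall off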

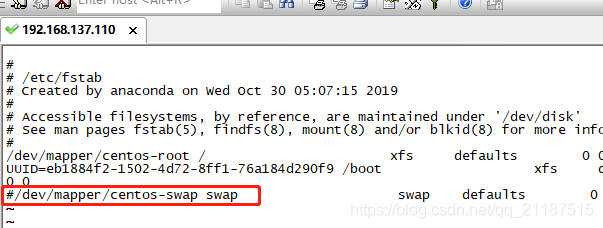

- Disable swap

[root@master01 ~]

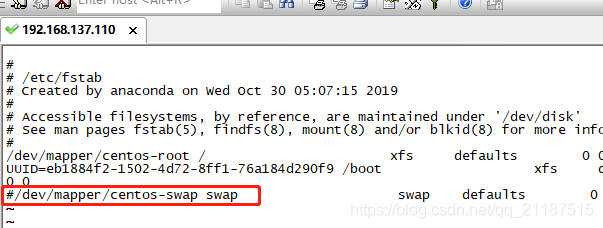

In that file, comment out the line containing "/dev/mapper/centos-swap swap"

Notes:

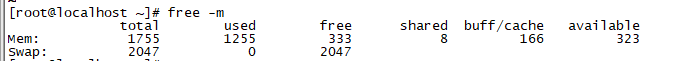

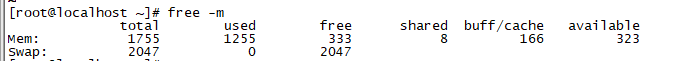

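1. Swap acts as "virtual memory". When physical memory runs short, part of the disk is set aside as a swap partition and used as if it were memory, which works around the shortage.

2. Since version 1.8, kubelet requires swap to be turned off

3. free -m shows the size of the swap space. Right after commenting out the line, this command still shows swap as active, because the change only takes effect after a reboot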
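As a minimal sketch of the swap step above, the helper below comments out every active swap entry in an fstab-style file. The function name `comment_out_swap` is hypothetical, and the pattern assumes that `swap` appears as a whitespace-separated field, as it does in the `/dev/mapper/centos-swap swap` line.

```shell
#!/usr/bin/env bash
# Hypothetical helper: comment out active swap entries in an fstab-style file.
comment_out_swap() {
    local fstab="$1" updated
    # Prefix '#' to every uncommented line that has 'swap' as its own field.
    updated=$(sed -E 's/^([^#].*[[:space:]]swap[[:space:]].*)$/#\1/' "$fstab") \
        && printf '%s\n' "$updated" > "$fstab"
}

# Usage (as root), shown as comments so nothing runs by accident:
#   comment_out_swap /etc/fstab   # keeps swap off after the next reboot
#   swapoff -a                    # turns swap off immediately, without a reboot
#   free -m                       # check the Swap row
```

Running the helper a second time leaves the file unchanged, because lines that already start with `#` are skipped.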

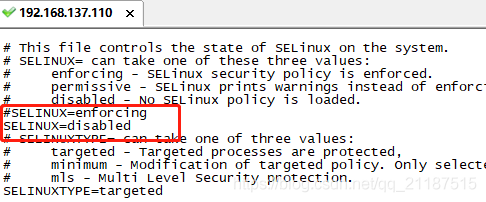

- Disable SELinux

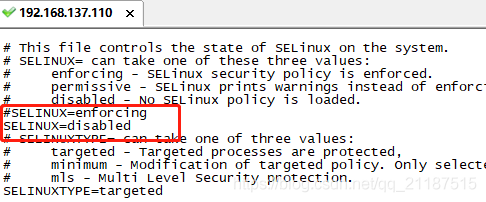

[root@master01 ~]

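Change the SELINUX line to SELINUX=disabled, then restart the machine with reboot

Notes:

1. SELinux is a component that hardens security. It is fairly complex, so it is usually just disabled

2. Disabling SELinux allows containers to access the host's file system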
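The edit described above can also be scripted. This is a sketch only: `set_selinux_disabled` is a hypothetical name, and it assumes the standard `/etc/selinux/config` layout with a single line starting with `SELINUX=`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: switch the SELINUX= mode to disabled in a config file.
set_selinux_disabled() {
    local conf="$1" updated
    # Only the 'SELINUX=' line is rewritten; 'SELINUXTYPE=' is left alone.
    updated=$(sed -E 's/^SELINUX=.*/SELINUX=disabled/' "$conf") \
        && printf '%s\n' "$updated" > "$conf"
}

# Usage (as root):
#   set_selinux_disabled /etc/selinux/config
#   setenforce 0    # permissive mode until the reboot
#   reboot
```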

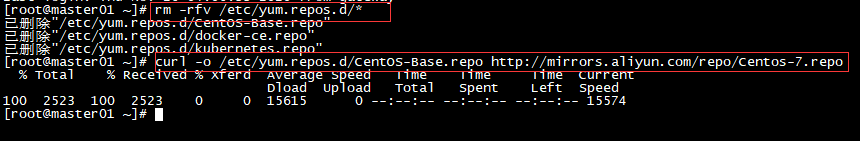

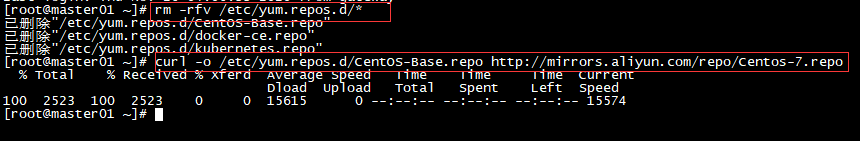

- Add the Aliyun repository

[root@master01 ~]

[root@master01 ~]

Note: on CentOS 8, change Centos-7.repo at the end of the URL to Centos-8.repo; otherwise yum will report that no packages are available when installing

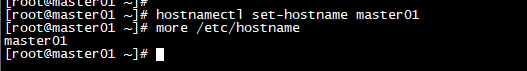

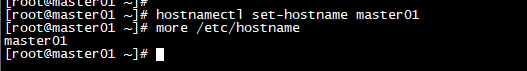

- Set the hostname

[root@master01 ~]

[root@master01 ~]

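Note: if this step is skipped, kubeadm join will later fail with a message that a node with the same name already exists. Every machine must have a different hostname; worker nodes can be named node01, node02, and so on

Configure kernel parameters so that bridged IPv4 traffic is passed to the iptables chains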

[root@master01 ~]

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

Note: the k8s network requires the kernel parameter bridge-nf-call-iptables=1. Without it, adding the network later will fail.
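A small sketch of writing these two settings with a here-document. The helper name `write_k8s_sysctl` and the file name `k8s.conf` are assumptions; any file under `/etc/sysctl.d/` is read by `sysctl --system`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write the bridge settings to a sysctl drop-in file.
write_k8s_sysctl() {
    local target="$1"
    cat > "$target" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
}

# Usage (as root); the file name k8s.conf is an assumption:
#   write_k8s_sysctl /etc/sysctl.d/k8s.conf
#   sysctl --system    # reload all sysctl configuration files
```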

Install common packages

[root@master01 ~]

Install docker-ce from the Aliyun repository

[root@master01 ~]

[root@master01 ~]

[root@master01 ~]

Note: the yum-config-manager command is used to configure the Aliyun repository, but it is provided by yum-utils, so yum-utils must be installed first

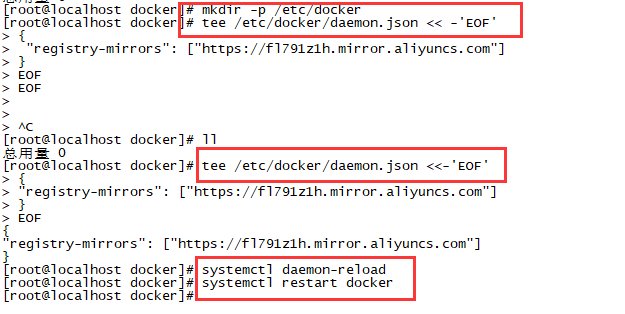

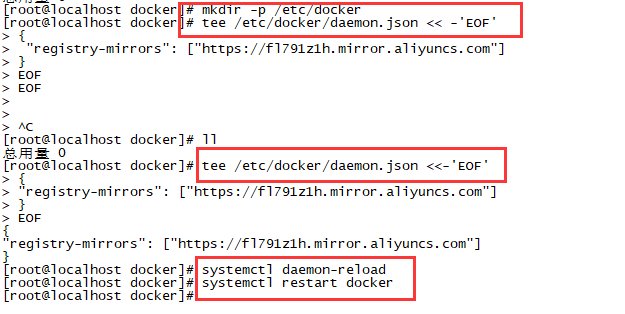

After installing Docker, add Aliyun's Docker registry mirror (accelerator)

[root@master01 ~]

[root@master01 ~]

{

"registry-mirrors": ["https://fl791z1h.mirror.aliyuncs.com"]

}

EOF

[root@master01 ~]

[root@master01 ~]

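Note: in tee /etc/docker/daemon.json <<-'EOF', the <<-'EOF' part contains no spaces. As the screenshot shows, the first time I typed it with a space, and entering EOF did not end the input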
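To illustrate the here-document rule from the note above, here is a sketch that writes the same daemon.json. The helper name `write_docker_mirror` is hypothetical. Unlike the `<<-'EOF'` form used in this guide, the delimiter here is unquoted so that the mirror URL can be passed in as a parameter.

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a Docker daemon.json with one registry mirror.
write_docker_mirror() {
    local target="$1" mirror="$2"
    # The delimiter is unquoted so that $mirror is expanded inside the body.
    # The closing EOF must sit alone on its line, with nothing before or after it.
    cat > "$target" <<EOF
{
  "registry-mirrors": ["$mirror"]
}
EOF
}

# Usage (as root):
#   mkdir -p /etc/docker
#   write_docker_mirror /etc/docker/daemon.json "https://fl791z1h.mirror.aliyuncs.com"
#   systemctl daemon-reload
#   systemctl restart docker
```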

Install kubectl, kubelet, and kubeadm

Add the Aliyun Kubernetes repository

[root@master01 ~]

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

Install the kubectl, kubelet, and kubeadm packages

[root@master01 ~]

[root@master01 ~]

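Note: a plain yum install kubectl kubelet kubeadm installs the latest version, 1.19.4, by default. That does not match the kubeadm init --kubernetes-version=1.18.0 I run later and causes an error, so I pin the version in the yum command here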
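The version pinning described in the note can be sketched as a tiny helper that builds the package list for one version, so that all three packages always stay in step. The function name `k8s_packages` is hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the three package names pinned to one version.
k8s_packages() {
    local version="$1" pkg out=""
    for pkg in kubelet kubeadm kubectl; do
        # Join the entries with single spaces.
        out="$out${out:+ }$pkg-$version"
    done
    printf '%s\n' "$out"
}

# k8s_packages 1.18.0 prints: kubelet-1.18.0 kubeadm-1.18.0 kubectl-1.18.0
# Usage (as root):
#   yum install -y $(k8s_packages 1.18.0)
```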

Initialize the k8s cluster (master node)

From this step on, the commands differ between the master and the worker nodes; all the steps above are the same on both

[root@master01 ~]# kubeadm init

When the cluster initializes successfully, it prints output like the following:

W1125 22:47:32.274048 47607 configset.go:202] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]

[init] Using Kubernetes version: v1.18.0

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at https://kubernetes.io/docs/setup/cri/

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [localhost.localdomain kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.10.0.1 192.168.137.110]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [localhost.localdomain localhost] and IPs [192.168.137.110 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [localhost.localdomain localhost] and IPs [192.168.137.110 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

W1125 22:47:35.941950 47607 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[control-plane] Creating static Pod manifest for "kube-scheduler"

W1125 22:47:35.943106 47607 manifests.go:225] the default kube-apiserver authorization-mode is "Node,RBAC"; using "Node,RBAC"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 21.504047 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.18" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see

Write down the kubeadm join command that follows in the output; it must be run on the other nodes when they join the Kubernetes cluster.

kubeadm join 192.168.137.110:6443

Configure kubectl

[root@master01 ~]

[root@master01 ~]

[root@master01 ~]

Notes:

1. Without $HOME/.kube/config in place, the kubectl command cannot be used

2. On a worker node the command is slightly different; the copy line there is: sudo cp -i /etc/kubernetes/kubelet.conf $HOME/.kube/config
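The notes above can be sketched as one helper that works for both node types. It is an assumption-laden sketch: `install_kubeconfig` is a hypothetical name, `/etc/kubernetes/admin.conf` is the file kubeadm writes on the master (see the `[kubeconfig] Writing "admin.conf"` line in the init output), and the helper is meant to be run as root, as in the rest of this guide.

```shell
#!/usr/bin/env bash
# Hypothetical helper: copy a kubeconfig file into <home>/.kube/config.
install_kubeconfig() {
    local src="$1" home_dir="$2"
    mkdir -p "$home_dir/.kube" || return 1
    cp "$src" "$home_dir/.kube/config" || return 1
    # Make the current user the owner so kubectl can read the file.
    chown "$(id -u):$(id -g)" "$home_dir/.kube/config"
}

# Usage on the master node:
#   install_kubeconfig /etc/kubernetes/admin.conf "$HOME"
# Usage on a worker node:
#   install_kubeconfig /etc/kubernetes/kubelet.conf "$HOME"
# Then check that kubectl works:
#   kubectl get nodes
```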

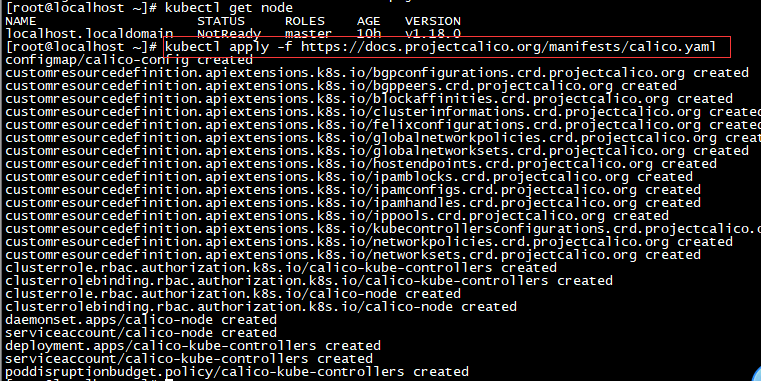

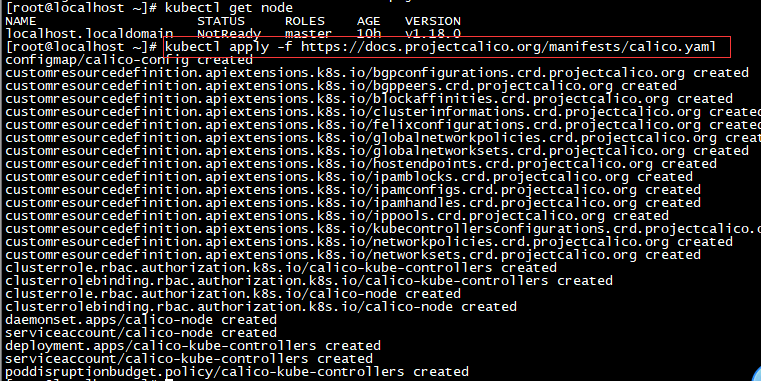

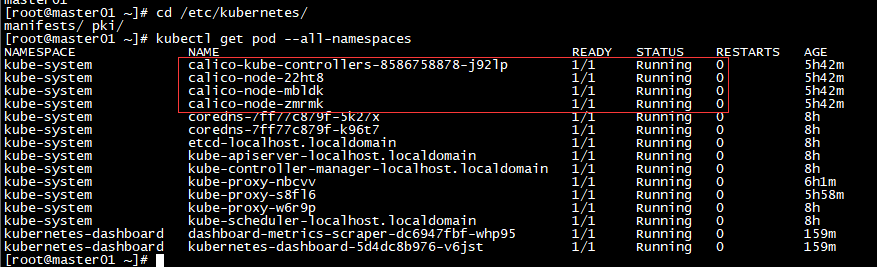

Install the Calico network (master node)

[root@master01 ~]

https://docs.projectcalico.org/manifests/calico.yaml


Note: a short while after installing the Calico network, run kubectl get node again; the node STATUS changes from NotReady to Ready

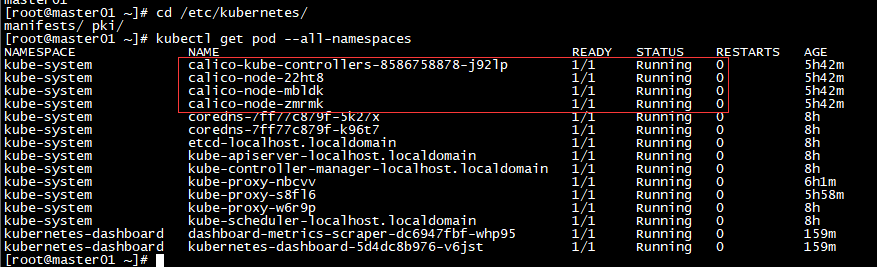

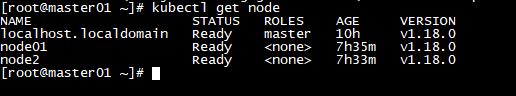

Join the worker nodes to the cluster (worker nodes)

[root@node01 ~]# kubeadm join 192.168.137.110:6443

Notes:

1. The token produced by kubeadm init is valid for 24 hours. After it expires, create a new one by running: kubeadm token create

2. kubeadm token list shows the list of tokens

Configure kubectl

[root@master01 ~]

[root@master01 ~]

[root@master01 ~]

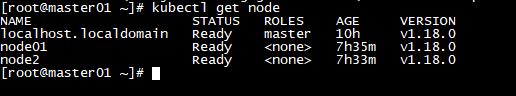

At this point, once all the worker nodes have joined the master node, the k8s environment is fully installed

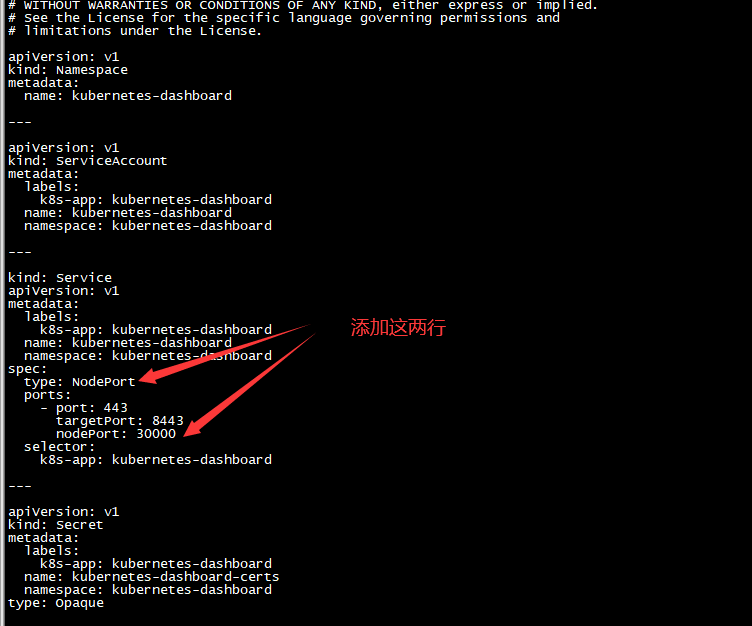

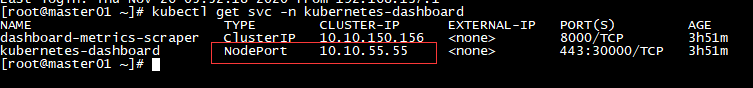

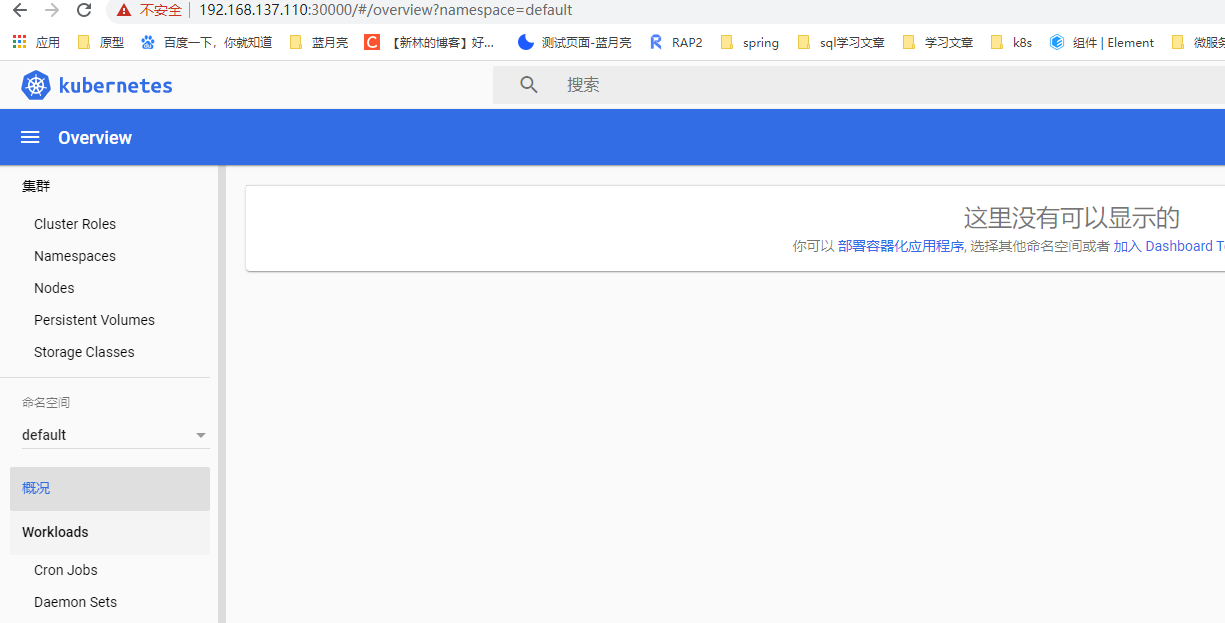

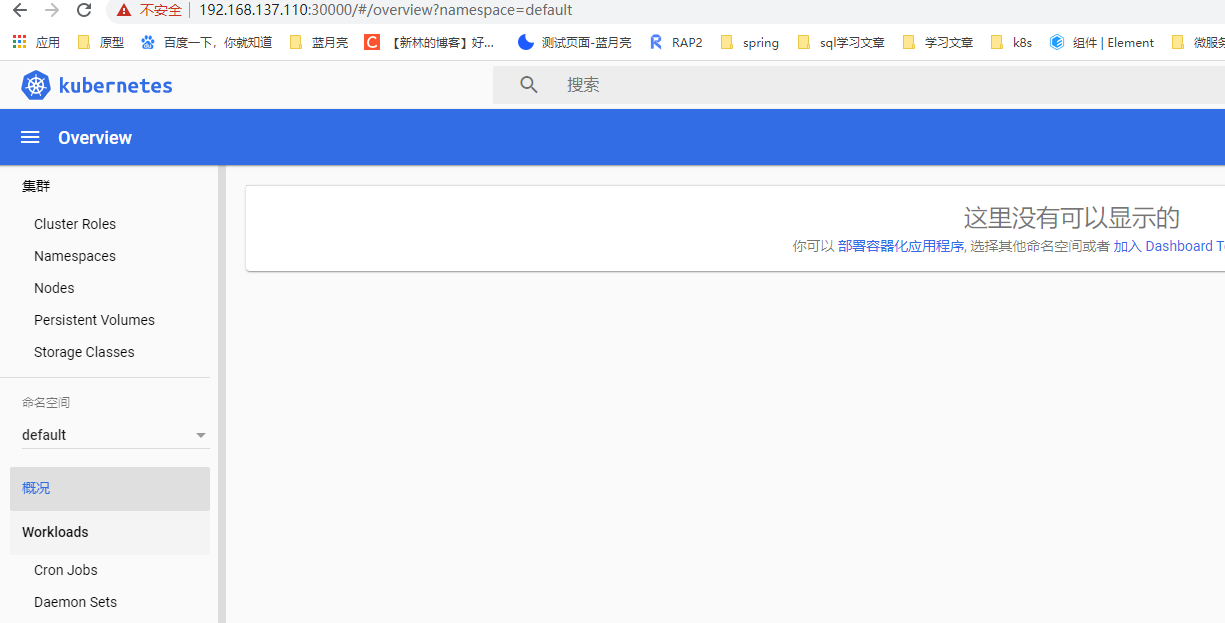

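Install kubernetes-dashboard (master node)

Dashboard is a visualization add-on that gives users a web interface for viewing information about the current cluster. With Kubernetes Dashboard, users can deploy containerized applications, monitor application status, troubleshoot problems, and manage Kubernetes resources.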

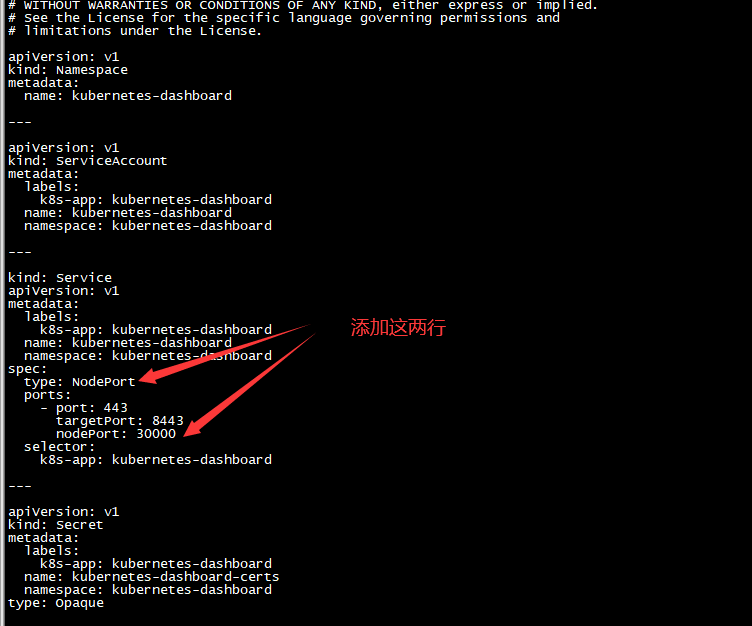

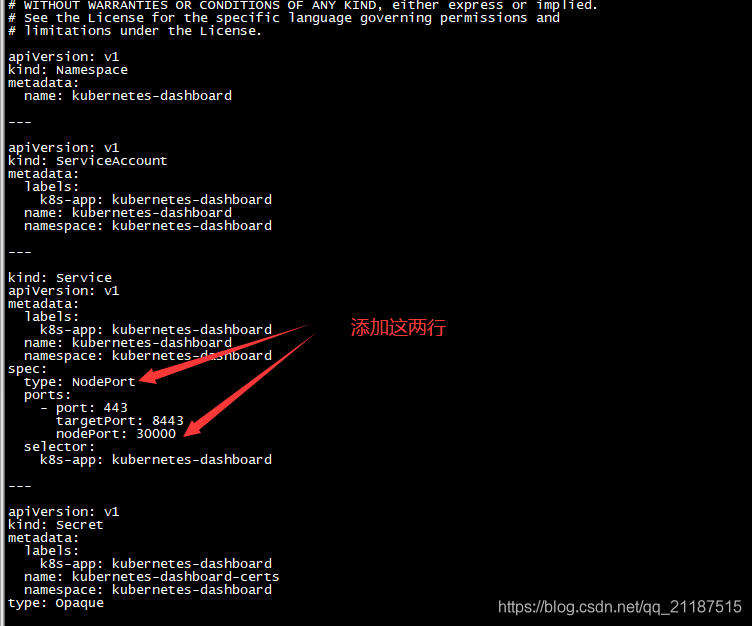

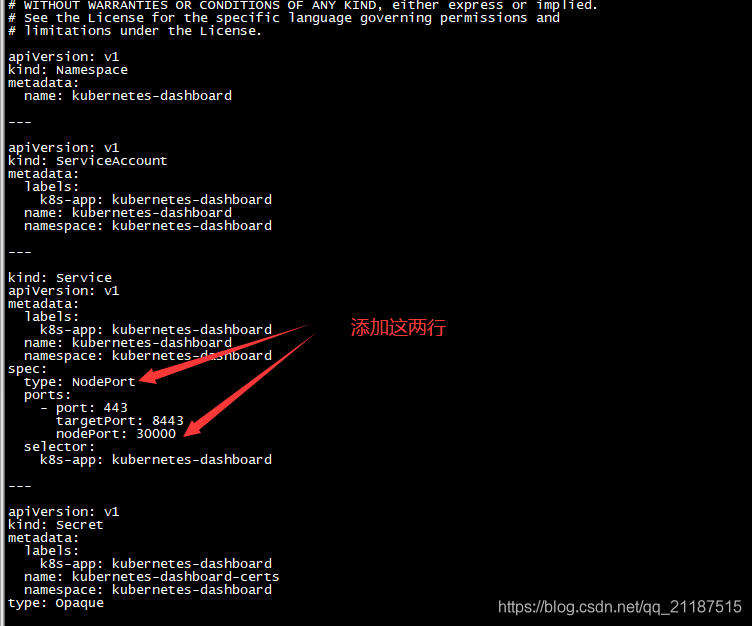

- The official dashboard deployment does not expose the service through a NodePort, so download the yaml file locally and add a NodePort to the Service

[root@master01 ~]

[root@master01 ~]

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  type: NodePort
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 30000
  selector:
    k8s-app: kubernetes-dashboard

[root@master01 ~]

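Note: this step may well fail with a connection refused error caused by network problems; the troubleshooting notes at the end cover it in detail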

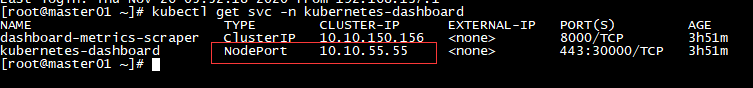

- After running kubectl create -f recommended.yaml, run the following command to confirm that the dashboard has started

[root@master01 ~]

- In a browser, open this machine's IP address plus the port (the nodePort set above, 30000) to reach the login page

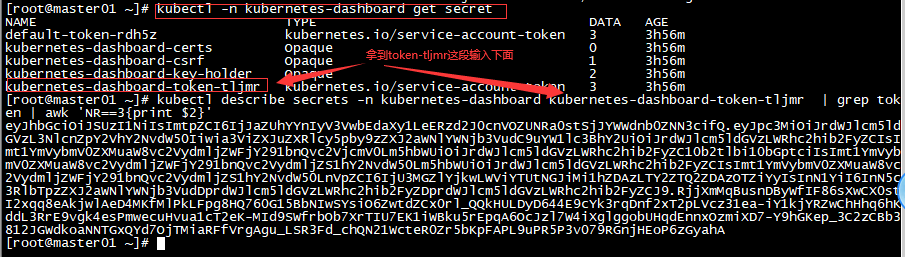

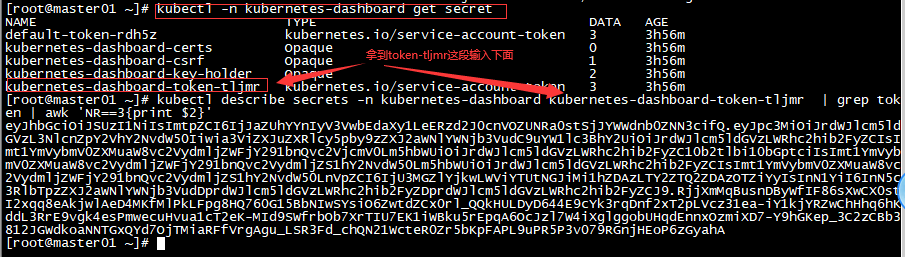

- Get the token

kubectl -n kubernetes-dashboard get secret

kubectl describe secrets -n kubernetes-dashboard kubernetes-dashboard-token-tljmr | grep token | awk 'NR==3{print $2}'

Note: kubectl -n kubernetes-dashboard get secret lists the secrets

- Paste the token into the token field on the login page and click Sign in
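The token command above picks the third line that contains the word "token", which depends on the order of the lines in the output. As an alternative sketch (not the method used in this guide), the hypothetical helper `extract_token` selects the line whose first field is exactly `token:` and prints its value.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl describe secret` output on stdin and
# print the value of the line whose first field is exactly "token:".
extract_token() {
    awk '$1 == "token:" { print $2 }'
}

# Usage; the secret name suffix (tljmr here) differs on every cluster:
#   kubectl -n kubernetes-dashboard describe secret kubernetes-dashboard-token-tljmr | extract_token
```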

Problems encountered during installation

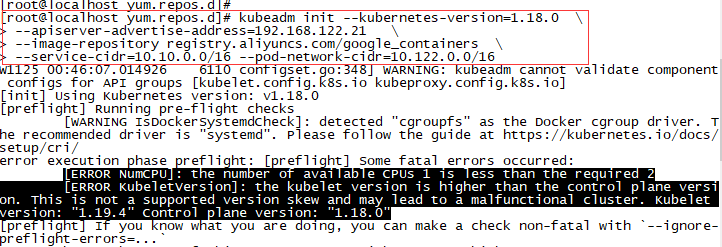

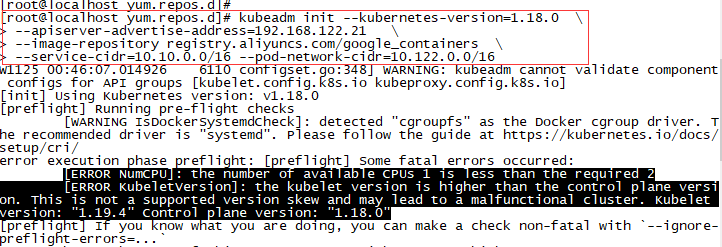

1. kubeadm init errors

1) [ERROR NumCPU] the number of available CPUs 1 is less than the required 2

2) [ERROR KubeletVersion] the kubelet version is higher than the control plane version…kubelet version: "1.19.4" Control plane version: "1.18.0"

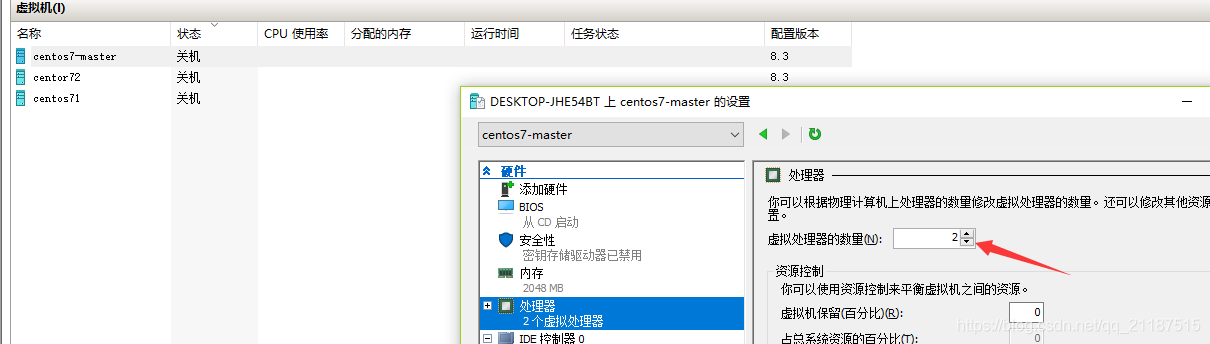

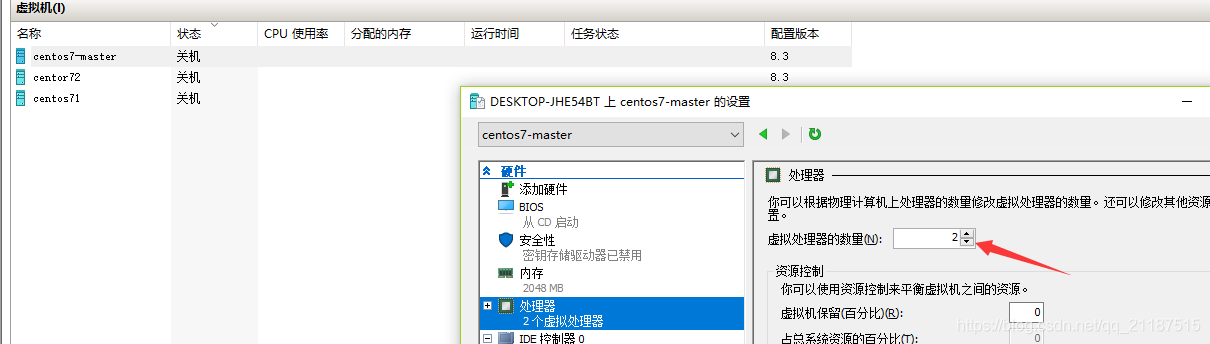

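- The first error, [ERROR NumCPU], occurs because the machine has only 1 CPU core while k8s needs at least 2. The physical or virtual machine does not meet Kubernetes' minimum requirements, which are at least 2 CPU cores and 2 GB of memory. Solution: increase the number of CPU cores of the virtual machine

- The second error, [ERROR KubeletVersion], is a version mismatch. I originally ran yum install kubelet kubeadm kubectl without pinning a version (so the latest, 1.19.4, was installed), but passed 1.18.0 to kubeadm init. Solution: run yum remove kubelet kubeadm kubectl, then reinstall with yum -y install kubelet-1.18.0 kubeadm-1.18.0 kubectl-1.18.0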
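Both kubeadm init errors above can be caught before running kubeadm. The two helpers below are a hypothetical sketch: `enough_cpus` checks the 2-core minimum, and `same_version` is a deliberately stricter check than the one behind the KubeletVersion error above, since it requires the two version strings to be identical.

```shell
#!/usr/bin/env bash
# Hypothetical pre-flight helpers mirroring the two kubeadm init errors.
enough_cpus() {
    # Succeeds when the given CPU count meets the minimum of 2.
    [ "$1" -ge 2 ]
}

same_version() {
    # Succeeds when both version strings are equal, ignoring a leading "v".
    [ "${1#v}" = "${2#v}" ]
}

# Usage:
#   enough_cpus "$(nproc)" || echo "need at least 2 CPUs"
#   same_version 1.19.4 1.18.0 || echo "kubelet and control plane versions differ"
```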

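2. kubeadm join errors

1) [ERROR FileAvailable--etc-kubernetes-pki-ca.crt]: /etc/kubernetes/pki/ca.crt

This happened because k8s had previously been installed on this machine; running kubeadm reset fixes it

2) You must delete the existing Node or change the name of this new joining

This error means the cluster already has a node with the same name; either delete the existing node or rename this one before joining again. Solution: run hostnamectl set-hostname node01 to change the hostname, then run kubeadm join again

3) [ERROR FileContent--proc-sys-net-bridge-bridge-nf-call-iptables]: /proc/sys/

Solution: run echo "1" > /proc/sys/net/bridge/bridge-nf-call-iptables

4) Token expired

The token produced by kubeadm init is valid for 24 hours. After it expires, create a new one by running: kubeadm token create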

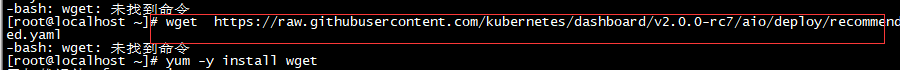

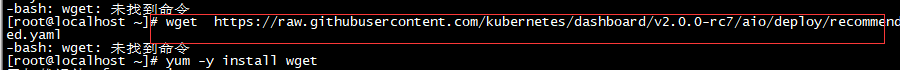

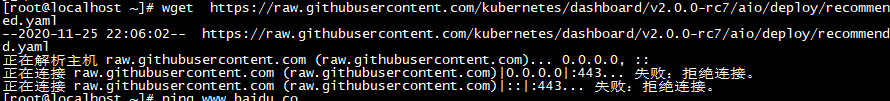

3. Errors while installing kubernetes-dashboard

1) The wget command is missing; install it with yum install wget

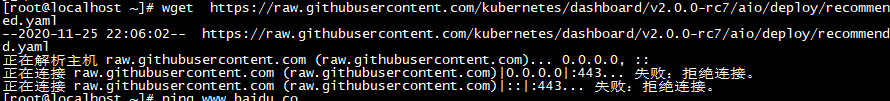

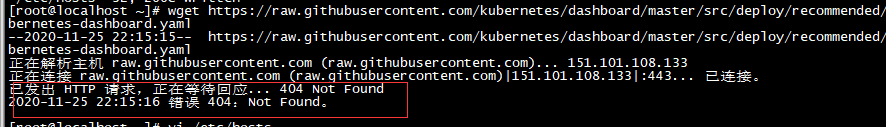

2) Connection refused: wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.0.0-rc7/aio/deploy/recommended.yaml

Go to https://site.ip138.com/raw.Githubusercontent.com/

Enter raw.githubusercontent.com

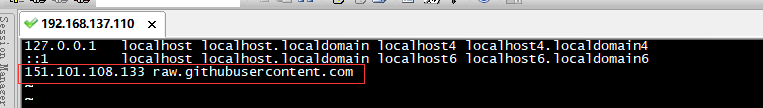

Look up its IP address, then add that IP to the hosts file

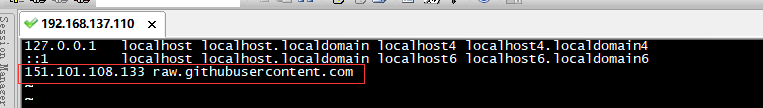

Open the file with vi /etc/hosts and add the following line

151.101.108.133 raw.githubusercontent.com

Error reference: the raw.githubusercontent.com:443 connection failure problem
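The hosts edit above can be made repeatable with a small sketch. `add_host_entry` is a hypothetical helper that appends the line only when the host name is not already mapped, so running it twice does not create duplicates. The IP address shown is the one from this guide and may no longer be current; take it from a fresh lookup.

```shell
#!/usr/bin/env bash
# Hypothetical helper: append "<ip> <name>" to a hosts file unless the
# host name is already mapped on an uncommented line.
add_host_entry() {
    local hosts="$1" ip="$2" name="$3"
    if ! awk -v n="$name" '
        $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$hosts"; then
        printf '%s %s\n' "$ip" "$name" >> "$hosts"
    fi
}

# Usage (as root):
#   add_host_entry /etc/hosts 151.101.108.133 raw.githubusercontent.com
```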

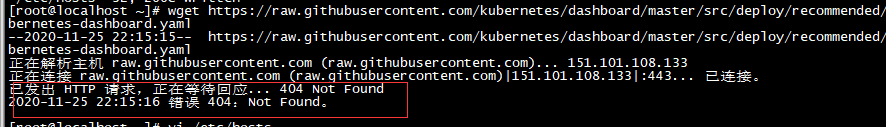

3) After configuring hosts as above, the download still failed with error 404: Not Found

Solution: retry a few times, or wait a while and try again

4) kubectl create -f recommended.yaml reports an error

There were two causes: first, I had initially left out the type: NodePort line; second, there were formatting mistakes such as wrong spacing

Solution: correct recommended.yaml, then run

kubectl delete -f recommended.yaml

kubectl create -f recommended.yaml

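4. Installing docker-ce fails, reporting that a package already exists

This happened because an older Docker version, 13.0, was already installed and I wanted to upgrade to 19. I removed the old version with yum remove docker and ran yum -y install docker-ce, but it still failed, reporting that the package docker.xxx.xx already exists. Removing that package as well with yum remove docker.xxx.xx and then running yum -y install docker-ce again solved it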
